Connecting Stores From Edge to Cloud: Reinventing Retail with Physical AI

Building a continuous learning intelligence layer for omnichannel retail in collaboration with NVIDIA

The future of retail isn’t physical or digital. It’s a unified experience fueled by a continuous learning system – powered by real-world data, captured and processed at the edge with Caper Carts, and combined with cloud systems that incorporate omnichannel data. Physical AI in the store, paired with AI in the cloud, will build the best understanding of customers, the store, shelves, and how they interact in real time, yielding highly personalized shopping experiences that retailers report increase store sales.

Grocery’s complexity

Brick-and-mortar stores are highly complex environments for successful deployment of technology.

Stores have poor and inconsistent Wi-Fi coverage. A single store carries tens of thousands of SKUs, and selection and planograms differ widely even between stores within a retail banner. A retailer’s catalog can contain millions of items and is often inaccurate and incomplete. GPS signals often fail indoors. Lighting varies widely. Shelves change in real time as customers grab items off the shelves and dozens of vendors travel in and out of a store, replenishing items. Brands regularly rotate packaging, launching seasonal items and store-specific assortments. The same cereal brand offers varying sizes, different flavors, and standard vs. gluten-free options. User behavior is diverse – people shop solo, with small children, or with their friends. Items are taken in and out of the basket at varying speeds, with items left partially visible, blurred, or blocked entirely. Thousands of nuanced shrink vectors exist and differ store to store. What’s more, in-store technology deployments require extreme reliability and low latency to earn customer trust, sustain adoption, and successfully influence purchasing behaviors.

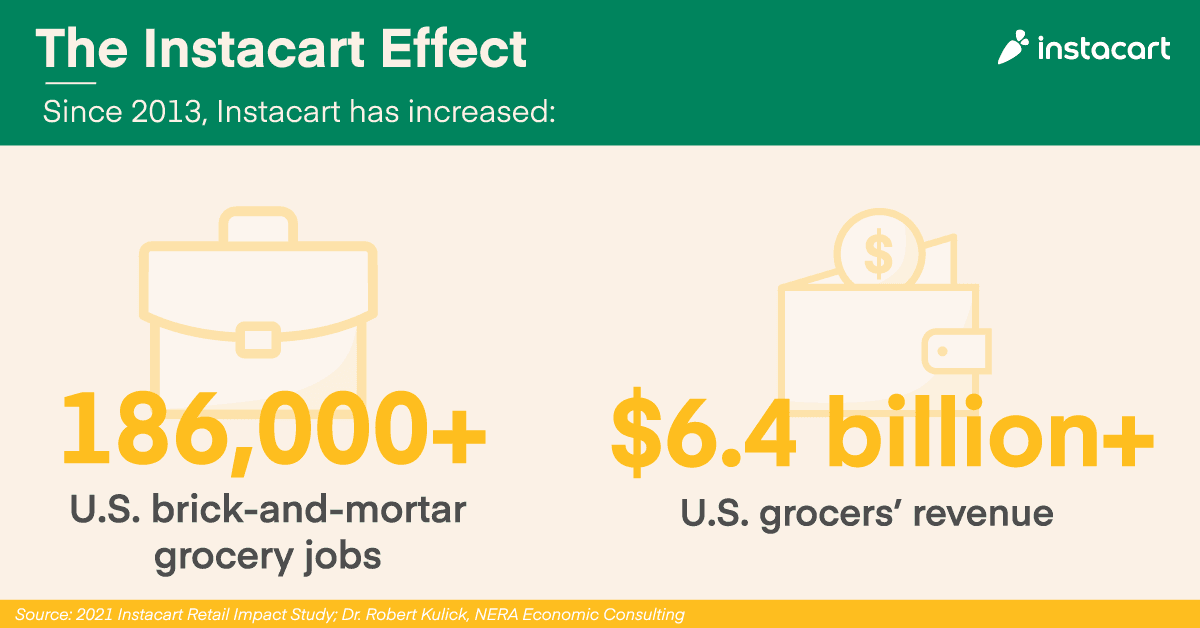

And on top of chaotic in-store environments, serving online customers introduces complexities across catalogs, item availability, and logistics. However, we understand this – deeply. Instacart partners with more than 2,200 retail banners across more than 100,000 store locations, and our Catalog Engine alone has tagged more than 1.3 billion data points. More broadly, our quality, reliability, and personalization are uniquely shaped by more than 1.6 billion lifetime orders.

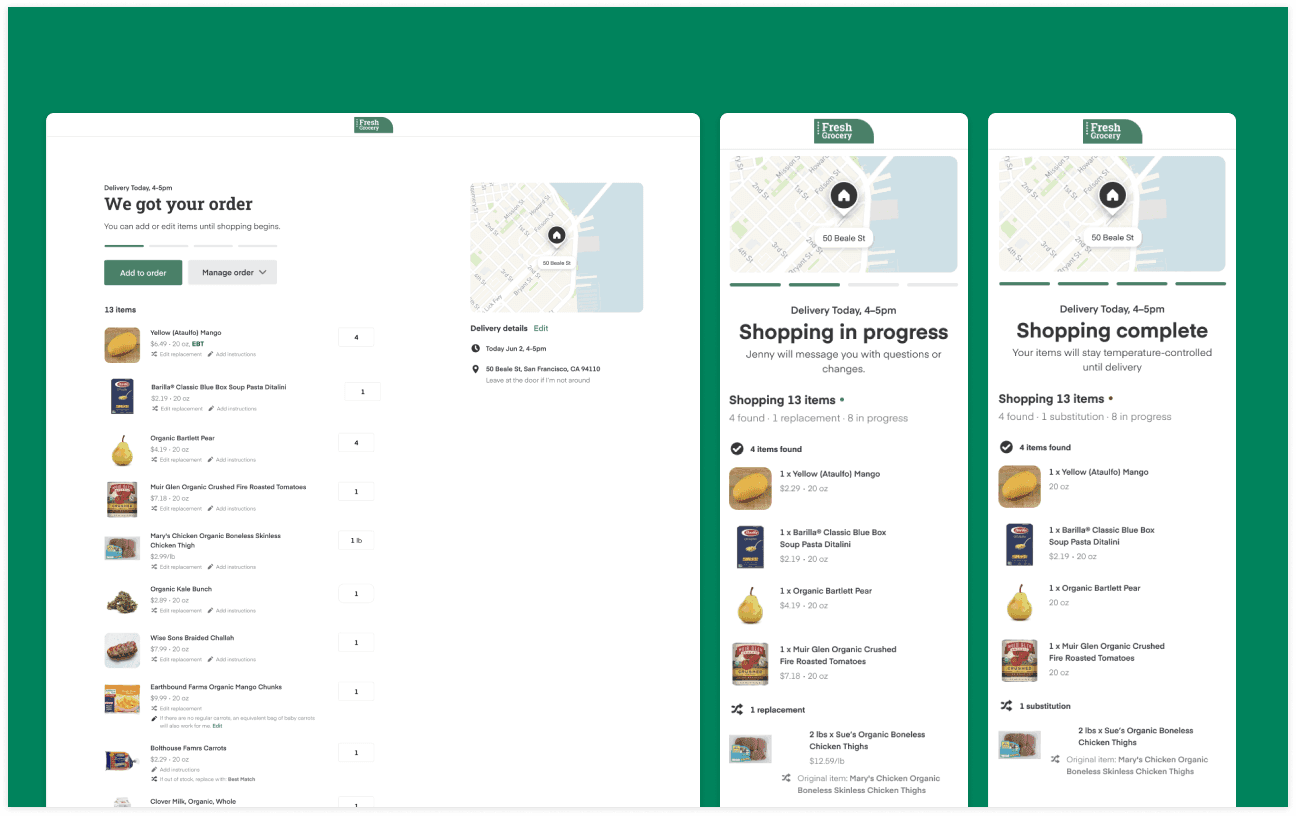

Serving customers online and in-store requires different technical approaches that, when connected, strengthen the ability to deliver the best experience in both environments.

Our Physical AI approach

Deploying sensors to physical stores at scale

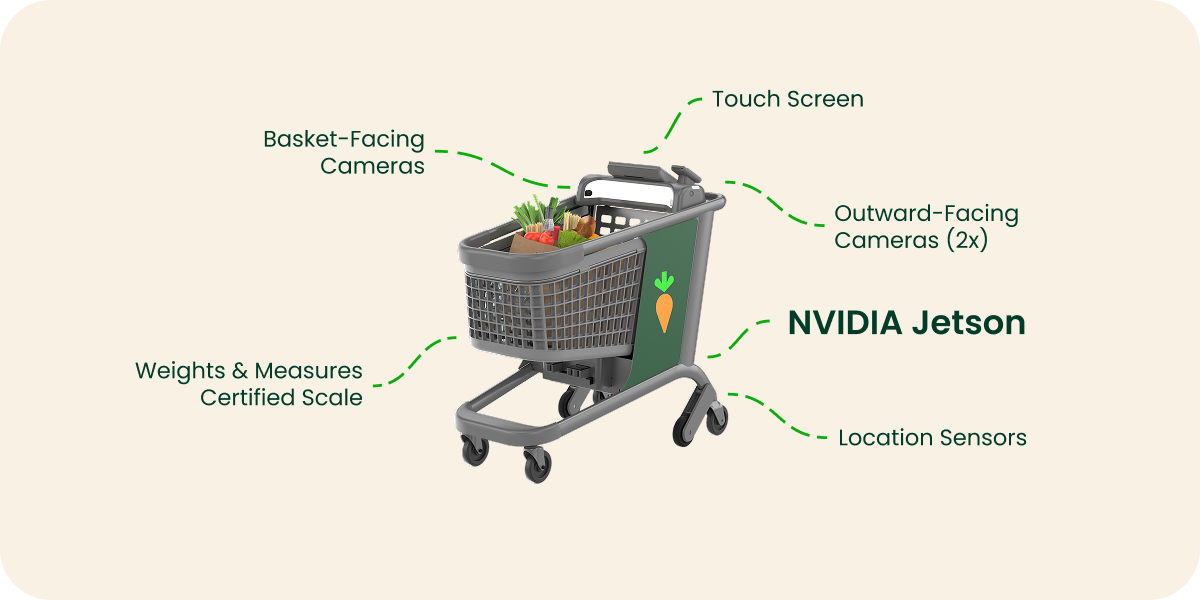

Caper Carts are equipped with basket-facing camera sensors, weights & measures-certified scales, location-tracking systems, outward-facing cameras, and NVIDIA Jetson on each cart that runs advanced sensor fusion and Physical AI systems. We capture millions of sensor inputs every day – which items are going in and out of the basket, how customers move through aisles, how physical interactions connect to purchase history, and shelf conditions – generating high-fidelity, real-world data at scale.

Thousands of Caper Carts are currently deployed across more than 100 cities, tripling year-over-year. As customers move Caper Carts through the store, we’re building a game-changing understanding of the store, shelves, and user behavior that updates as frequently as every hour.

Best accuracy with multimodal sensor fusion

Two systems work in parallel to understand the basket from visual sensors: an edge encoder leveraging NVIDIA Jetson for real-time feedback and cloud vision-language model (VLM) encoders for reasoning on visual windows of context. The two embedding feeds are combined in a shopping experience decoder that infers user actions, item information, location, and shelf information.

Caper Carts use two cameras to triangulate the exact location of an item or multiple items in 3D space within the basket, enabling us to track multiple items moving in different directions in real time. However, cameras can often be blocked or obscured, so another signal – the weight of the basket captured from the built-in scale – is critical to building an accurate understanding. Weight essentially serves as an X-ray of the basket contents.
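The two-camera triangulation described above can be illustrated with the standard rectified-stereo relation, in which depth equals focal length times camera baseline divided by disparity. This is a minimal sketch under idealised assumptions, not a description of the Caper pipeline: the function name `triangulate_point`, the focal length, the baseline, and the pixel coordinates are all hypothetical.

```python
def triangulate_point(focal_px, baseline_m, cx_px, cy_px, left_xy, right_xy):
    """Return (X, Y, Z) in metres, in the left camera frame, for one matched point.

    Assumes an idealised, rectified stereo pair: two identical cameras offset
    horizontally by baseline_m, so a matched point differs only in its x pixel.
    """
    disparity = left_xy[0] - right_xy[0]
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity      # depth from similar triangles
    x = (left_xy[0] - cx_px) * z / focal_px    # back-project pixel to metres
    y = (left_xy[1] - cy_px) * z / focal_px
    return x, y, z


# Hypothetical numbers: 800 px focal length, cameras 10 cm apart,
# principal point at (640, 360), item seen at x=740 (left) and x=540 (right).
point = triangulate_point(800.0, 0.10, 640.0, 360.0, (740.0, 360.0), (540.0, 360.0))
```

With these assumed values the item sits roughly 0.4 m from the cameras and 5 cm right of the optical axis. A smaller disparity means a deeper point, which is why nearby items in a basket are comparatively easy to localise.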

Fusing signals that often conflict into a coherent view of the basket is highly challenging. Every signal is noisy. For example, weight can drift from cart movement and takes time to converge (1-3 seconds per change). Imagine a diving board after a person bounces on it: it takes time to stabilize. A lot can happen within 3 seconds. Hands block labels, items are placed upside down or partially inside a shopping bag, and multiple items are stacked together.
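The diving-board behaviour of the scale can be made concrete with a simple settling detector: a reading is only trusted once the last few samples agree with each other. The sketch below is one illustrative approach, not the production method. The function name `settled_levels`, the window size, the 5 g tolerance, and the sample readings are all assumptions.

```python
from collections import deque


def settled_levels(readings_g, window_size=5, tolerance_g=5.0):
    """Return (sample_index, weight) each time the scale settles at a new level.

    A level counts as settled when the last window_size readings span no more
    than tolerance_g. Repeated reports of the same level are suppressed.
    """
    window = deque(maxlen=window_size)
    levels = []
    for index, reading in enumerate(readings_g):
        window.append(reading)
        if len(window) < window_size:
            continue  # not enough samples yet
        if max(window) - min(window) > tolerance_g:
            continue  # still bouncing, keep waiting
        level = sum(window) / window_size
        if not levels or abs(level - levels[-1][1]) > tolerance_g:
            levels.append((index, level))
    return levels


# Hypothetical trace: empty basket, then an item lands and the scale oscillates.
readings = [0, 0, 0, 0, 0, 480, 530, 495, 505, 500, 500, 501, 499, 500]
print(settled_levels(readings))  # [(4, 0.0), (13, 500.0)]
```

The new level is only reported at sample 13, eight samples after the item landed. That delay is the gap the visual signals have to cover while the weight is still converging.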

Our sensor fusion approach combines weight, location, and visual signals to deliver a highly accurate understanding of the user’s basket. The system gets better and better with more real-store data from millions of sensor inputs every day, ranging from daily customer shops to associates performing basket reviews and audits. The system keeps learning, and nothing compares to the scale of live baskets and real-world sensor data processed at the edge. We’ve activated a powerful grocery data flywheel that continuously improves our technology.
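To illustrate why weight helps when vision alone is ambiguous, here is a minimal sketch that rescores visual candidates by how well their catalog weight matches the measured change on the scale. It uses a textbook Gaussian likelihood as a stand-in and is not the fusion model described here. The function name, the item names, the catalog weights, and the 10 g noise level are hypothetical.

```python
import math


def fuse_vision_and_weight(visual_scores, catalog_weight_g, measured_delta_g, sigma_g=10.0):
    """Combine visual confidence with a Gaussian weight likelihood per candidate.

    visual_scores:     candidate item -> confidence from the vision model
    catalog_weight_g:  candidate item -> expected weight in grams
    measured_delta_g:  settled change in basket weight
    Returns normalised posterior scores that sum to 1.
    """
    unnormalised = {}
    for item, score in visual_scores.items():
        z = (measured_delta_g - catalog_weight_g[item]) / sigma_g
        unnormalised[item] = score * math.exp(-0.5 * z * z)
    total = sum(unnormalised.values())
    if total == 0.0:
        raise ValueError("no candidate is consistent with the measured weight")
    return {item: value / total for item, value in unnormalised.items()}


# Hypothetical case: vision slightly prefers the family-size box,
# but the scale moved by 338 g, which only fits the regular box.
posterior = fuse_vision_and_weight(
    visual_scores={"cereal_family": 0.55, "cereal_regular": 0.45},
    catalog_weight_g={"cereal_family": 510.0, "cereal_regular": 340.0},
    measured_delta_g=338.0,
)
best = max(posterior, key=posterior.get)  # "cereal_regular"
```

In this assumed example, two near-identical packages that the cameras cannot separate are resolved by a 170 g difference in expected weight.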

In turn, we’re creating a critical foundation: basket accuracy. By knowing exactly what is in the basket and when items were placed, we’re uniquely able to minimize shrink and reduce user friction – essential to driving customer adoption.

Pinpointing location via sensor fusion

Gaining an accurate understanding of a customer’s location can’t rely on traditional positioning. GPS signals degrade indoors, which makes obtaining an accurate in-store location far more complex. Discerning between closely spaced aisles and frequently moving displays requires a sensor fusion approach. This approach incorporates Wi-Fi signals, magnetic fields, wheel encoders, and visuals from side cameras. We use NVIDIA Jetson for visual retrieval, both at the shelf level and the item level, from side-facing cameras – and with a single pipeline, we obtain cart and item location at the same time. Our system then fuses the two, creating a single model for all stores that requires minimal store-specific retraining, which is critical for cost-efficient scaling across physical stores.
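One common way to combine several noisy position estimates is inverse-variance weighting, where each source counts in proportion to how much it is trusted. The sketch below shows that generic technique as an illustration only. It is not the model described above, and the function name, the coordinates, and the variances assigned to each source are made up.

```python
def fuse_positions(estimates):
    """Fuse independent 2D position estimates by inverse-variance weighting.

    estimates: list of ((x, y), variance) pairs, variance in squared metres.
    Returns the fused (x, y) and the variance of the fused estimate.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    x = sum(w * pos[0] for (pos, _), w in zip(estimates, weights)) / total
    y = sum(w * pos[1] for (pos, _), w in zip(estimates, weights)) / total
    return (x, y), 1.0 / total


# Hypothetical readings: Wi-Fi is coarse, wheel odometry is better,
# and a visual shelf match is the most precise.
fused_xy, fused_var = fuse_positions([
    ((12.0, 4.0), 9.0),    # Wi-Fi
    ((10.0, 3.0), 1.0),    # wheel encoders
    ((10.5, 3.2), 0.25),   # side-camera visual retrieval
])
```

The fused variance is always smaller than the smallest input variance, which is the basic argument for fusing sources rather than picking the single best one.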

Capturing a near real-time view of the store

Side-facing cameras build a view not only of where a Caper Cart is in the store, but exactly what’s on the shelf. This is critical to inform recommendations and out-of-stock detection that don’t rely on planogram accuracy. Retailers often lack comprehensive and up-to-date planograms, yielding a poor understanding of item location. Making successful item recommendations on Caper Cart’s screen requires an accurate understanding of both the cart’s location and the item’s location on the shelf – if either system is inaccurate, the recommendation fails. For example, the cart may know it’s located 10 feet into Aisle 3, but if the retailer's planogram suggests cereal is next to the cart when it was in fact moved to Aisle 5, the recommendation will be incorrect. Caper Cart’s side-facing cameras combined with edge and cloud systems generate an understanding of item location – a critical signal for item recommendations.
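The cereal example can be expressed as a small gating rule: prefer the shelf location observed by the cameras, and fall back to the planogram only when nothing has been observed. This is an illustrative sketch. `ShelfLocation`, `should_recommend`, and the 15-foot threshold are hypothetical names and values, not part of any Caper API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShelfLocation:
    aisle: int
    offset_ft: float  # distance into the aisle


def should_recommend(cart: ShelfLocation,
                     observed: Optional[ShelfLocation],
                     planogram: ShelfLocation,
                     max_distance_ft: float = 15.0) -> bool:
    """Recommend only if the item is in the cart's aisle and within reach.

    The camera-observed location wins over the planogram when available.
    """
    item = observed if observed is not None else planogram
    if item.aisle != cart.aisle:
        return False
    return abs(item.offset_ft - cart.offset_ft) <= max_distance_ft


cart = ShelfLocation(aisle=3, offset_ft=10.0)
planogram = ShelfLocation(aisle=3, offset_ft=12.0)   # stale: says cereal is nearby
observed = ShelfLocation(aisle=5, offset_ft=12.0)    # cameras saw it in Aisle 5

print(should_recommend(cart, observed, planogram))   # False
print(should_recommend(cart, None, planogram))       # True
```

The second call shows the failure mode from the text: with only a stale planogram to go on, the rule fires a recommendation for an item that is no longer there.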

Recommendations in the store

Retailers have finite moments during a shop to capture attention and drive purchase decisions. Mistimed recommendations don’t just fail; they train customers to ignore the next one. Timing matters – miss the moment, and retailers miss the opportunity.

Our approach combines our leadership in delivering online shopping recommendations, trained on more than 1.6 billion lifetime grocery orders, with signals in the store – what’s in a user’s basket, what was removed, cart location, how the user is interacting with the cart’s digital screen, what’s on the shelf and where – to ensure each recommendation is delivered at exactly the right time and place.

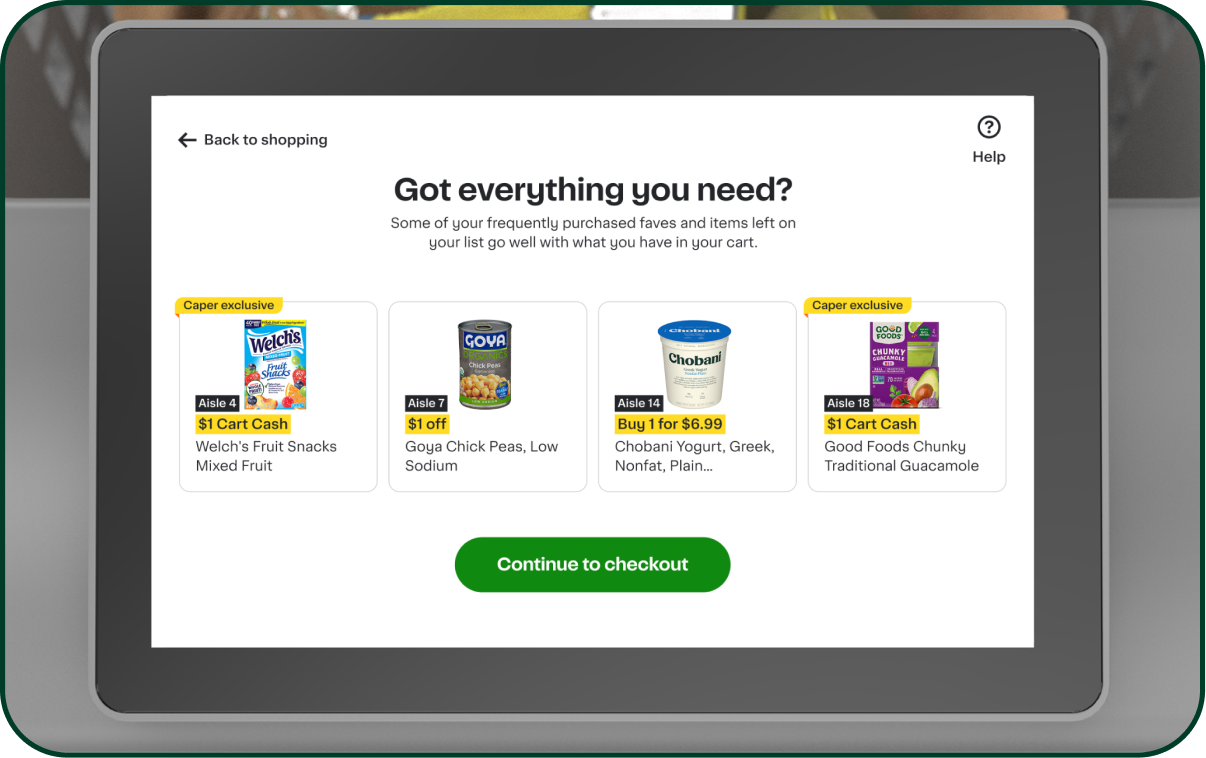

For example, prompts like, “Got everything you need?” are driving a nearly 1 percentage point lift in basket size on average, highlighting the opportunity to use real-time context and location data to drive incremental value for brands and retailers.

As more people shop online and in-store, Instacart’s recommendation systems are improving every day as we capture millions of in-store sensor inputs, user engagement with Caper Cart’s screen, and interactions from online grocery shopping experiences. For example, a recent ranking improvement leveraging our online data signals and systems drove more than 1%* in basket lift on Caper Carts. And in the future, in-store data from Caper Carts will personalize a user’s experience while shopping online – activating a data flywheel between in-store and online, ultimately increasing sales lift and retail media revenue.

Our Online AI approach

Powering ranking across retailer storefronts and ads

We continue to improve the recommendations we serve online. In the cloud, NVIDIA Dynamo-Triton helps power item ranking, retail media, and personalization. By migrating workloads from CPU to GPU serving, we’ve decreased latency for whole page ranking by 65% and item ranking by 40%. In our experiments, migrating to a transformer-based architecture increased click-through on sponsored products by 5% or more, with a corresponding increase in revenue.** This edge-to-cloud architecture enables faster, more relevant recommendations across both e-commerce and in-store experiences.

Personalizing shopping with agentic AI

Building on this foundation, we’re introducing new ways to personalize the grocery experience. We announced Cart Assistant, our omnichannel chatbot shopping companion, across the Instacart App, Storefront Pro, and Caper Carts. By understanding previously purchased items, grocery lists, household preferences, and in-store location, Cart Assistant streamlines shopping by making meal planning, finding products to meet dietary needs, and creating grocery lists for the family feel effortless. Cart Assistant learns with every interaction, making shopping easier, smarter, and seamlessly connected across online and in-store.

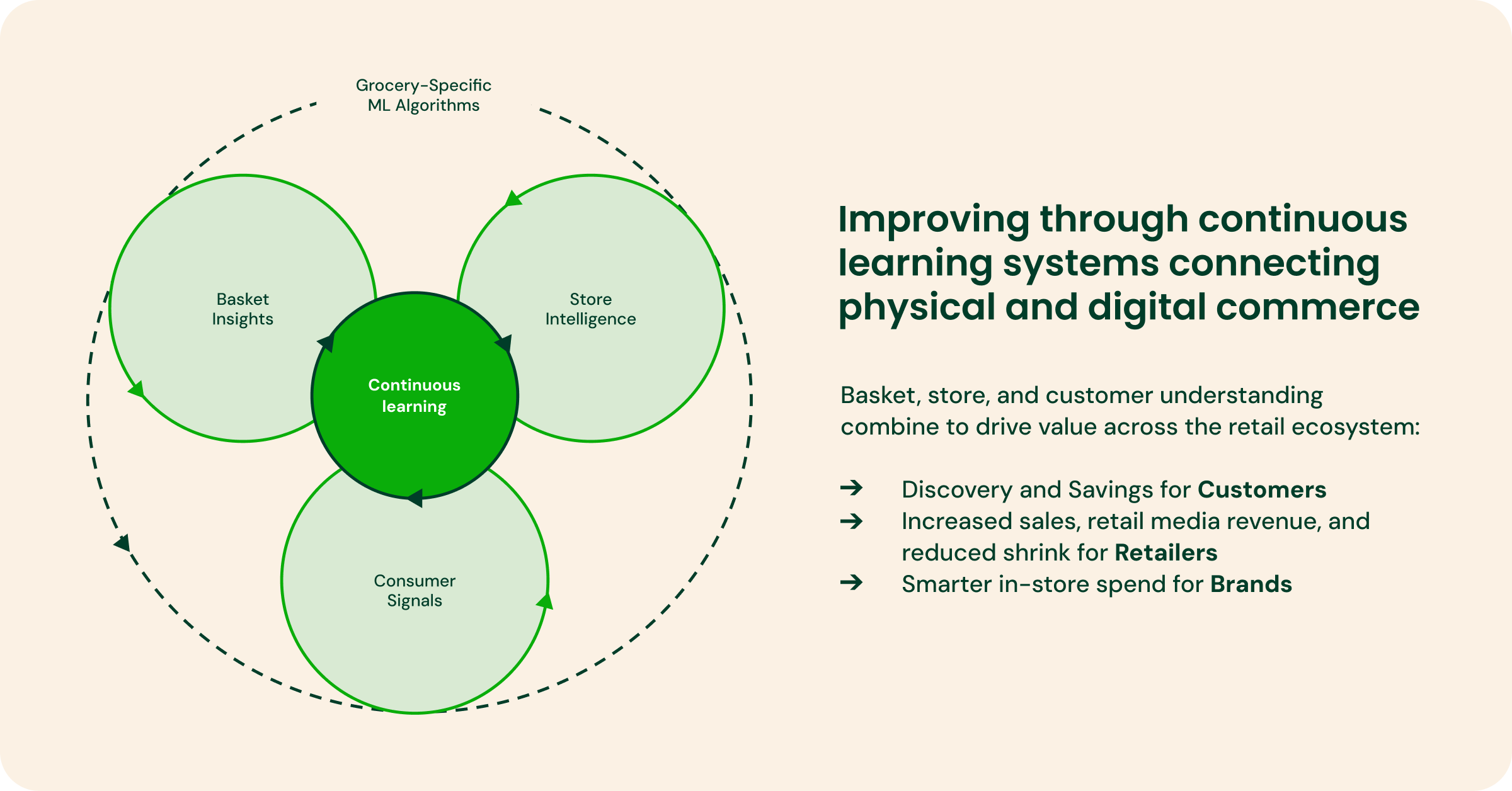

Delivering the ‘grocery world model’ for retail

Our vision is to build a grocery world model – a foundational AI system that doesn’t just understand products in isolation, but understands the entire living ecosystem of grocery retail. This means how products relate to each other, how customers make decisions, and how stores operate physically and commercially. By combining Instacart’s commerce graph, including more than 1.6 billion lifetime grocery orders and a catalog of more than 2 billion items, with near real-time store visibility from Store View scans, Caper Cart sensors, and deep retail partner integrations, we’ll create a continuous learning system that connects physical stores with digital commerce across a GPU-native edge-to-cloud platform.

Extending this layer, we can build a system of AI expert agents across catalog intelligence, inventory, store operations, recommendations, logistics, and more. Together, these agents form an integrated retail reasoning system, optimizing decisions across online and in-store environments in real time. For example, store managers will be able to ask an agent, “Can you tell me which shelves need restocking based on foot traffic and sales?” or even rely on agents to automatically coordinate with merchants, optimize assortment, and personalize the shopping experience for customers – ultimately maximizing sales and improving store operations.

This platform transforms retail infrastructure from fragmented CPU-based software into a unified, GPU-native AI system powered by NVIDIA – driving higher sales lift, improved retail media yield, higher in-stock rates, lower fulfillment costs, and a durable competitive advantage for the retail industry.

Bringing our vision to life in collaboration with NVIDIA

Core to this vision is NVIDIA – from in-store at the edge to online. Every Caper Cart is deployed with NVIDIA Jetson at the edge, NVIDIA Dynamo-Triton runs in Instacart’s cloud to help power item ranking, retail media, and personalization, and we expect to roll out Nemotron to help power our agentic platforms.

In collaboration with NVIDIA, we’re well-positioned to shift the retail industry from CPU to GPU.

“Grocery is one of the most complex real-world AI challenges in retail, and we believe Physical AI is the way to truly digitize the store,” said Azita Martin, VP and GM of Retail and CPG at NVIDIA. “Instacart’s approach – combining edge computing, accelerated AI infrastructure, and deep marketplace data – unifies online and in-store intelligence by processing signals at the edge and scaling intelligence in the cloud to lay the foundation for the next era of omnichannel retail.”

From observation to optimization

For retailers, Physical AI can drive larger baskets, retail media revenue, reduced shrink, and decreased out-of-stocks.

For customers, it means more relevant recommendations, greater savings, fewer missed essentials, and a more fun, intuitive shopping experience.

And looking ahead, this foundation enables a true ‘grocery world model’ – a comprehensive understanding of customers, shelves, and store environments. As stores become observable, they become optimizable.

Instacart is uniquely equipped to build this system by combining in-store data from Caper Carts at the edge with deep retailer integrations and online systems drawing on more than 1.6 billion lifetime grocery orders.

The future of retail isn’t physical or digital. It’s a unified experience fueled by this continuous learning system.

*Based on average order value for orders completed through Caper Carts between August 15, 2025 and October 15, 2025 that incorporated the ranking improvement, compared to orders that did not incorporate it.

**Preliminary results from an experiment in February 2026 on select ad products; findings may not generalize across campaigns, formats, or time periods.

David McIntosh

Author

David McIntosh is VP of Connected Stores at Instacart. His team delivers solutions that enable retailers to offer seamless retail experiences across online and in-store, benefitting retailers and their customers. Previously, in his role as VP of Product, Retailer, he led the development of Instacart Platform. Prior to joining Instacart in 2021, David was the CEO and co-founder of Tenor, a leading expression search engine that Google acquired in 2018. At Google, David grew Tenor to over 1 billion users and more than 1 billion queries per day.